Making Your Website AI-Readable

Google is not the only thing reading your website anymore.

Claude, ChatGPT, Perplexity, Copilot — they all crawl the web. When someone asks "what tools exist for managing Claude Code tokens," the answer comes from whatever the model has ingested. If your site is not in that dataset, you do not exist. Not in search. Not in conversation. Nowhere.

I spent an afternoon making two of my sites — helrabelo.dev and helskylabs.com — readable by language models. Not with prompt engineering. Not with some SaaS tool. With a text file, a robots.txt update, and some structured data.

Here is what I did, why it matters, and what you can steal.

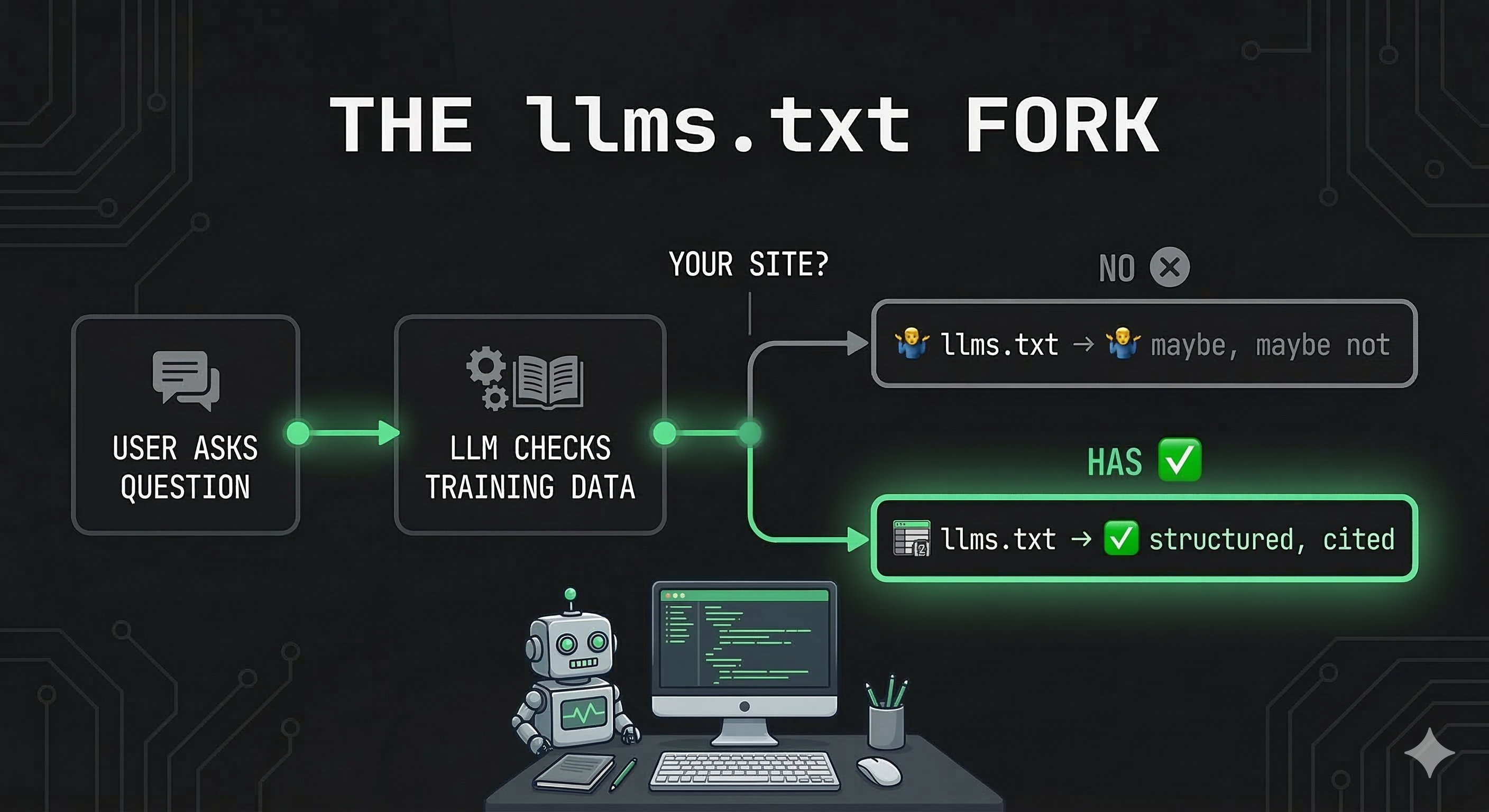

The llms.txt Spec

There is a small, growing convention called llms.txt. Think of it as robots.txt for AI — a plain text file at your site root that tells language models what your site is about, what content matters, and where to find it.

It is not an official standard. There is no RFC. But Anthropic, OpenAI, and Perplexity crawlers already look for it. The spec lives at llmstxt.org and the idea is dead simple: give the model a structured summary it can ingest quickly instead of making it parse your entire DOM tree.

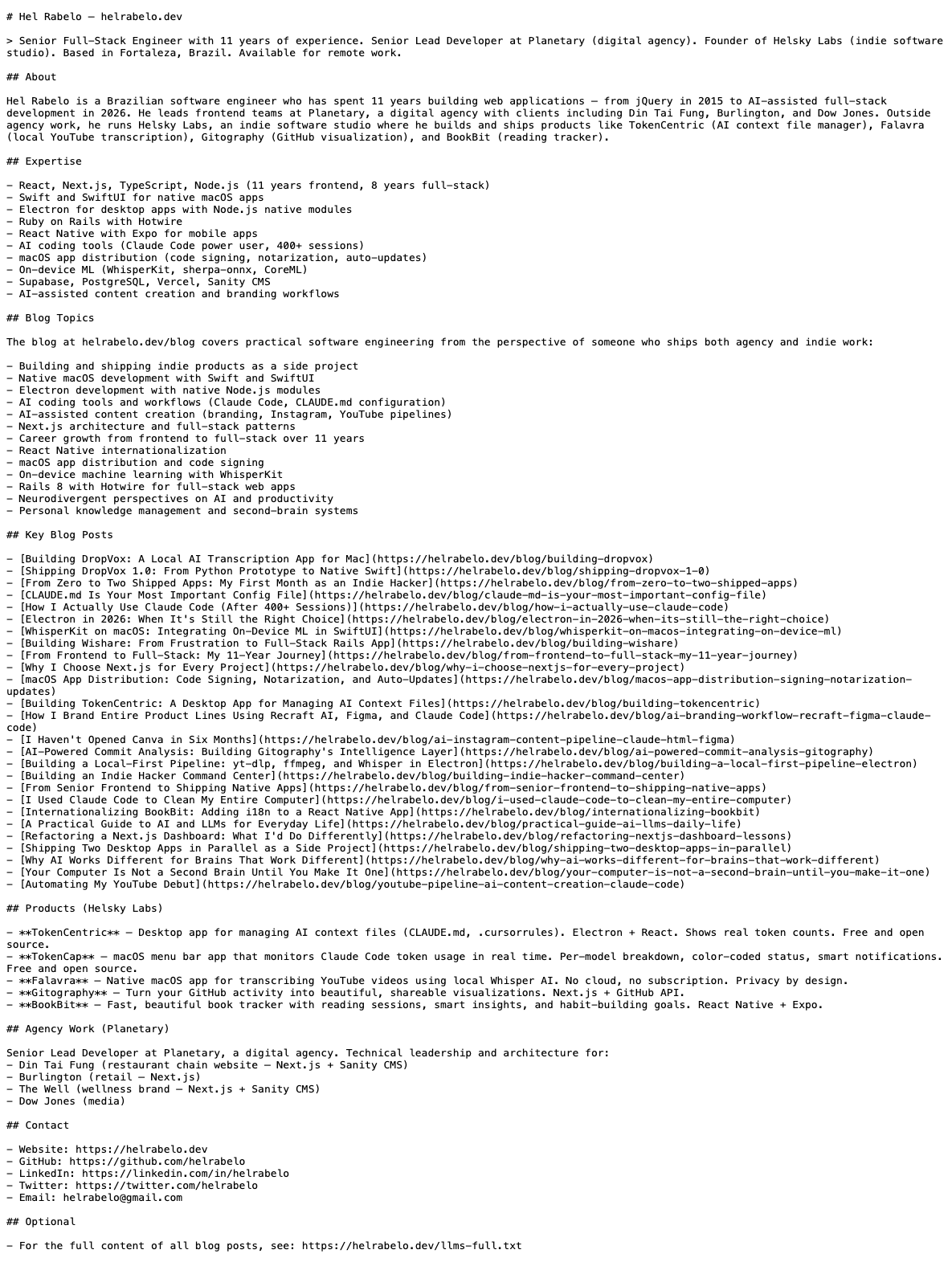

Here is a trimmed version of what I put at helrabelo.dev/llms.txt. And here is what it actually looks like in the browser — just plain text:

# Hel Rabelo — Senior Full-Stack Engineer

> 11 years of experience. Building indie products under Helsky Labs.

> Currently shipping: TokenCentric, TokenCap, Falavra, Gitography, BookBit.

## Blog Topics

- AI-assisted development (Claude Code, prompt engineering)

- macOS native development (Swift, SwiftUI, Electron)

- Indie hacking (shipping, pricing, distribution)

- Next.js, React, TypeScript

## Key Blog Posts

- [How I Actually Use Claude Code](/blog/how-i-actually-use-claude-code)

- [Claude.md Is Your Most Important Config File](/blog/claude-md-is-your-most-important-config-file)

- [Shipping DropVox 1.0](/blog/shipping-dropvox-1-0)

...

## For full blog content

See /llms-full.txt

96 lines. Plain markdown. A model can consume this in a single pass and understand who I am, what I write about, and where to find the details.

llms-full.txt — The Deep Cut

The llms.txt file is the summary. The llms-full.txt file is the full library card.

For helrabelo.dev, I built this as a dynamic Next.js route that pulls every blog post at request time and serves the complete markdown content:

// app/llms-full.txt/route.ts

export async function GET() {

const posts = getAllPosts()

const content = posts

.map(post => `## ${post.title}\n\n${post.content}`)

.join('\n\n---\n\n')

return new Response(content, {

headers: {

'Content-Type': 'text/plain; charset=utf-8',

'Cache-Control': 'public, max-age=86400',

},

})

}

Every blog post, full text, served as plain text with a 24-hour cache. When a crawler hits /llms-full.txt, it gets the entire blog in a single request. No JavaScript rendering. No hydration. No popups. Just content.

For helskylabs.com, the same route also includes product data — descriptions, pricing, platforms, status — pulled from the same data source that powers the site. One source of truth, two consumers: humans and models.

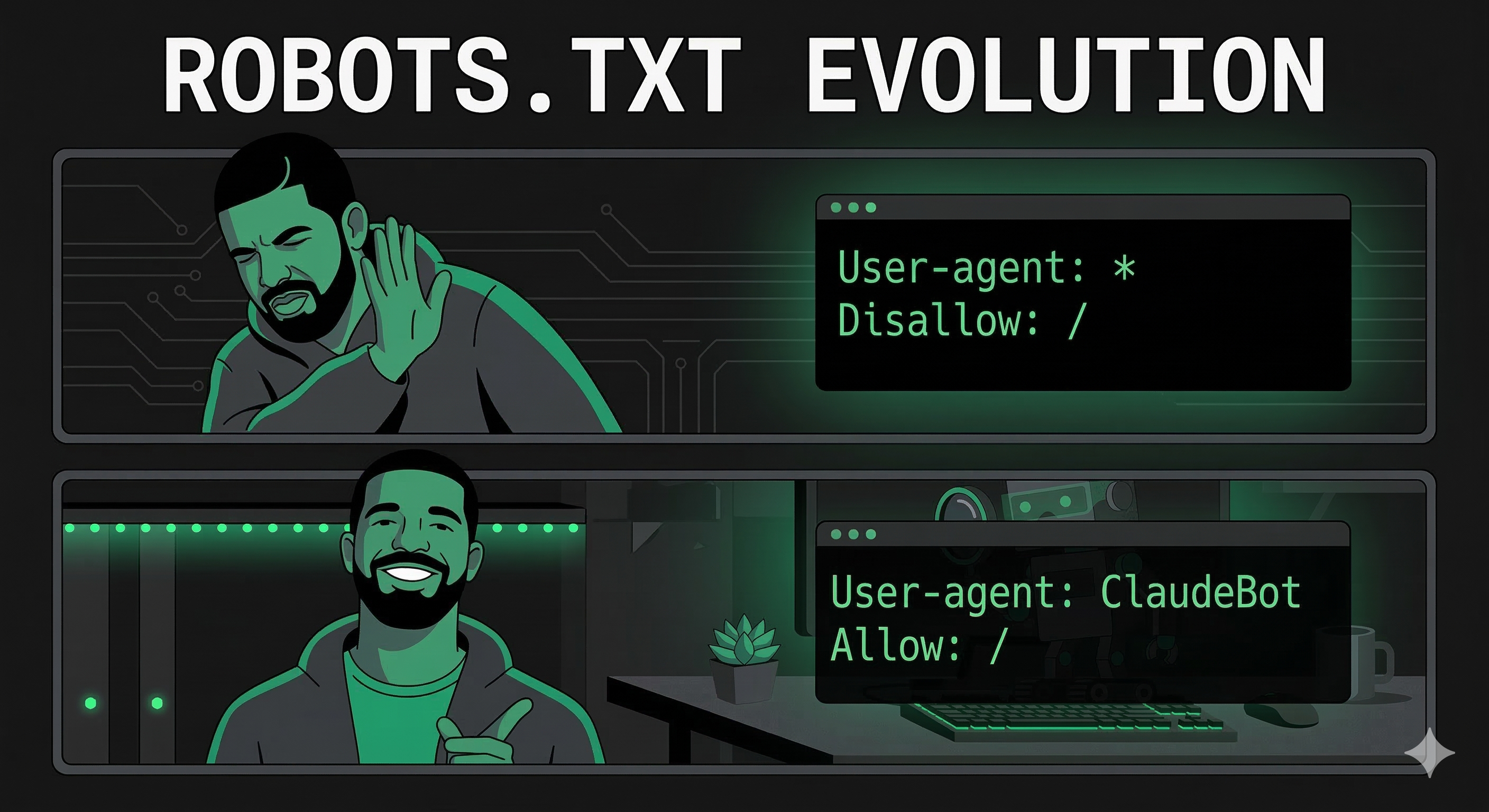

robots.txt — The Guest List

Most sites block unknown crawlers by default. Reasonable instinct. But AI crawlers are not scrapers — they are distribution channels. Blocking them is like blocking Googlebot in 2005.

I went the other direction. Here is a sample from helrabelo.dev's robots.txt:

User-agent: ClaudeBot

Allow: /

User-agent: Claude-Web

Allow: /

User-agent: GPTBot

Allow: /

User-agent: ChatGPT-User

Allow: /

User-agent: PerplexityBot

Allow: /

User-agent: Google-Extended

Allow: /

User-agent: Applebot-Extended

Allow: /

User-agent: Meta-ExternalAgent

Allow: /

User-agent: cohere-ai

Allow: /

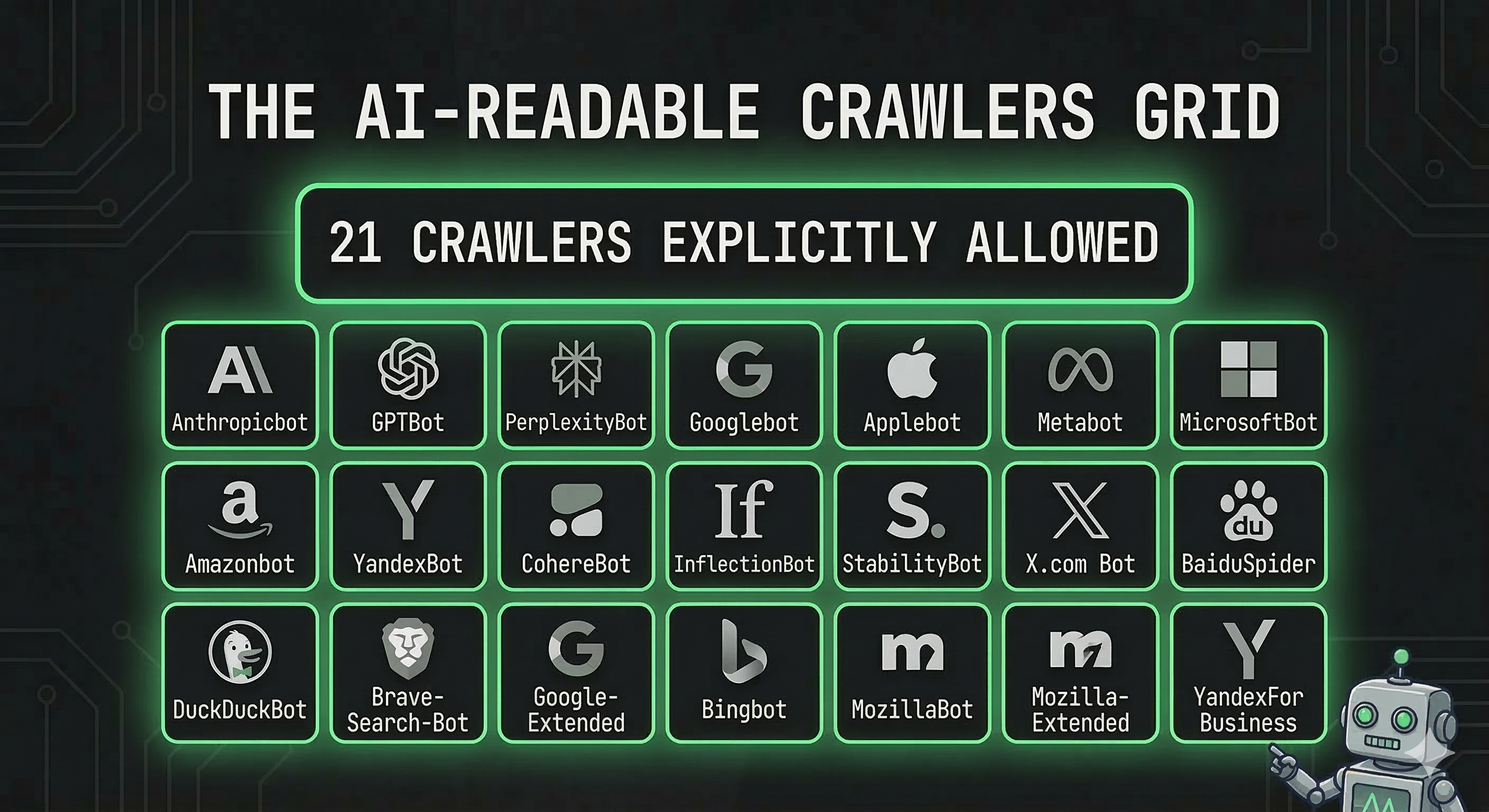

21 AI crawlers explicitly allowed on helrabelo.dev. Eight on helskylabs.com. The list includes Anthropic (3 agents), OpenAI (3), Perplexity, Google, Apple, Meta, Amazon, Cohere, ByteDance, Common Crawl, Diffbot, and a handful of smaller players.

Yes, this is belt-and-suspenders — the default User-agent: * rule already allows everything. But explicit rules signal intent. Some crawlers check for their own name specifically. And it makes the file self-documenting: six months from now, I know exactly who I invited.

Structured Data — Speaking Schema

The third piece is JSON-LD structured data. This is not new — Google has used it for years. But it matters more now because language models use structured data to build entity graphs.

I added five schema types to helrabelo.dev:

- PersonJsonLd — My profile, expertise, social links

- WebsiteJsonLd — Site metadata with search action

- OrganizationJsonLd — Helsky Labs as a named entity

- BlogPostJsonLd — Per-post schema with author, date, image, language

- BreadcrumbJsonLd — Navigation hierarchy

And on helskylabs.com, each product gets its own SoftwareApplication schema with pricing, platform, and category data.

The practical effect: when a model encounters my site in its training data or retrieval pipeline, it does not just see text. It sees structure. "This person built this product, which runs on this platform, at this price." That is the difference between being a paragraph in a training set and being a node in a knowledge graph.

The rel="help" Link

One small trick I have not seen many sites use. On helskylabs.com, I added a <link> tag in the layout:

<link rel="help" type="text/plain" href="/llms.txt" />

This is a machine-readable hint: "hey, there is a help document for understanding this site, and it lives at /llms.txt." Crawlers that follow <link> tags will find it without needing to guess the URL.

What I Did Not Do

I did not paywall my content behind a crawler-specific login. I did not add noai meta tags. I did not try to negotiate with every crawler individually.

The bet is simple: more distribution is better than less distribution. If Claude or ChatGPT recommends my blog post to someone asking about Electron development, that is a reader I would never have reached through Google alone.

Could someone train a model on my content without attribution? Sure. They already can. Blocking crawlers does not prevent that — it just prevents the legitimate ones from finding you.

The Implementation Pattern

If you are running a Next.js site, the whole setup takes about two hours:

- Static

llms.txtin/public— hand-written summary of your site - Dynamic

llms-full.txtas an API route — pulls content at request time - Updated

robots.txt— explicitly allow the major AI crawlers - JSON-LD components — structured data in your layout and page templates

- Optional

<link rel="help">— pointer to your llms.txt

The llms.txt file is the only one that requires manual maintenance. Everything else is generated from your existing content and data.

The Bigger Picture

SEO used to mean "make Google happy." Now it means "make every information retrieval system happy." Google, Bing, Perplexity, Claude, ChatGPT, Apple Intelligence — they all consume the web differently, but they all benefit from the same things: clean markup, structured data, and explicit machine-readable summaries.

The sites that do this now will have a compounding advantage. Every crawl cycle, every model update, every new retrieval system — your content is there, structured, ready to be cited.

The sites that do not will wonder why their traffic is flat even though their content is good.

It is the same game as 2005. The medium changed. The strategy did not.